Compare AI coding agents and choose the best AI agent for coding based on workflow, risk, and quality. See how Abstracta Intelligence helps govern AI-assisted development.

Every few months, engineering teams get a new answer to the same search: what is the best AI agent for coding?

The names change quickly. Claude Code, Cursor, GitHub Copilot, Codex, Devin, and other agents keep adding new capabilities. Some can read repositories, edit files, generate tests, run commands, and open pull requests.

GitHub Copilot cloud agent can research a repository, create an implementation plan, make iterative code changes on a branch, let developers review the diff and iterate, and create a pull request when ready. Anthropic describes Claude Code as an agentic coding tool that reads codebases, edits files, runs commands, and integrates with development tools.

OpenAI describes Codex as an AI agent that helps developers write, review, and ship code. Codex can work with code in GitHub repositories and create pull requests from its work. Those capabilities matter. They are changing how development work gets assigned, executed, reviewed, and measured.

But in real-world conversations, the most useful pattern is not about the tool. The real advantage does not come from choosing an AI coding agent once; AI coding agents are only as useful as the context around them.

🔴 A weak ticket becomes a weak prompt.

🔴 A flaky test suite becomes a weak feedback loop.

🔴 An unclear business rule becomes generated code that looks right until production proves otherwise.

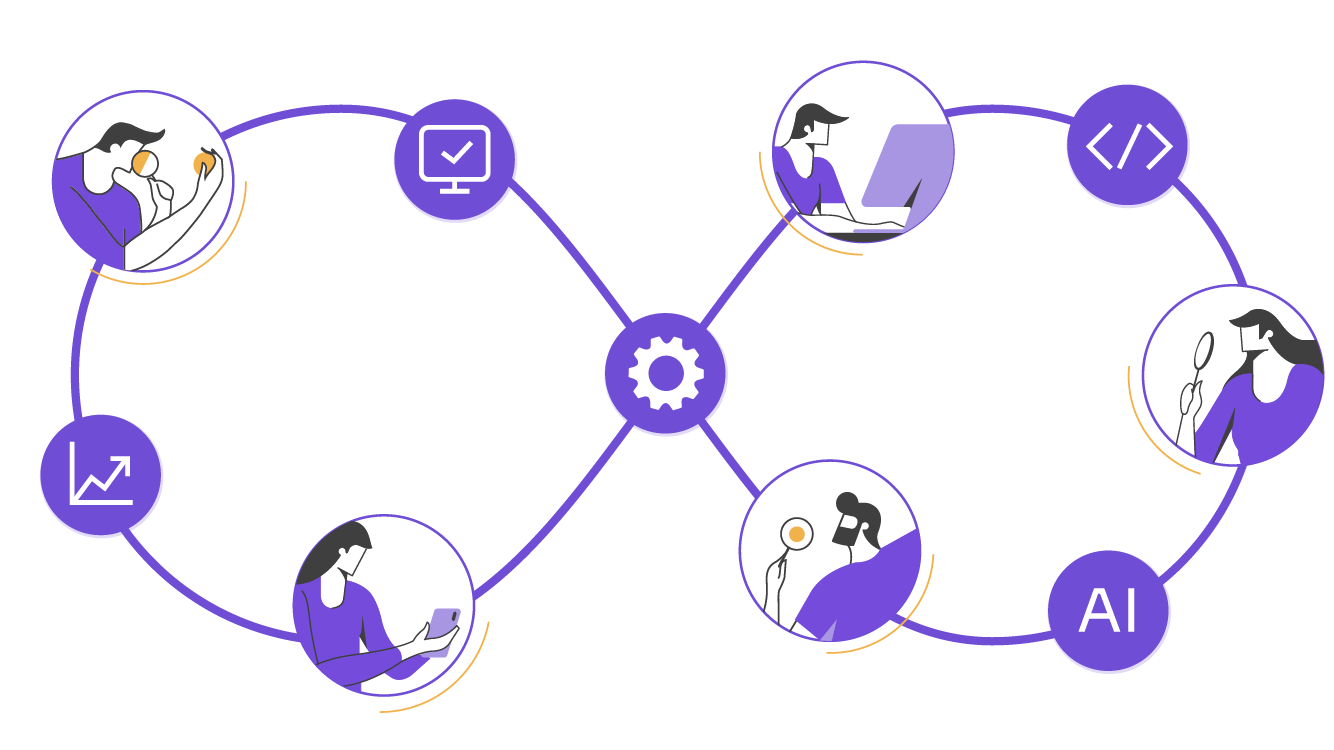

For teams building complex software, the advantage comes from building Quality Intelligence, meaning the ability to connect code, tests, logs, metrics, context, and human expertise into actionable insight.

Need stronger quality control for AI-assisted development?

Abstracta helps enterprise teams apply Quality Intelligence across complex software systems, so AI coding agents support delivery with better context, governance, and human review.

Book a meeting to explore our AI-powered quality engineering solutions.

So, What Is the Best AI Agent for Coding?

For many teams, the shortlist will include tools such as Claude Code, Cursor, GitHub Copilot, Codex, and Devin. Each one fits a different workflow.

Claude Code is strong for agentic work inside a developer’s environment. GitHub Copilot fits teams already working deeply inside GitHub. Codex supports cloud-based software engineering tasks connected to repositories. Devin positions itself as an AI coding agent for serious engineering teams, including complex, multi-repo projects.

Tool choice affects cost, security, developer experience, compliance, and integration with the existing stack. Still, engineering leaders need another layer of evaluation.

The best AI coding agent for a team is the one that can work inside its delivery system without creating unmanaged risk.

That requires clear requirements, reliable tests, coding standards, CI/CD checks, security controls, production context, and human review.

✔️ A powerful agent in a weak delivery system creates faster uncertainty.

✔️ A capable agent in a mature quality system can help teams move faster with more control.

How AI Coding Agents Differ in Practice

Most searches for the best AI agent for coding mix several categories into one list. That creates confusion. An AI coding assistant, an AI IDE, a terminal-first coding agent, a background coding agent, and a quality-aware delivery agent don’t solve the same problem.

A developer asking for help with a single line of code has a different need than an enterprise team asking agents to handle multi-file changes, generate tests, work with infrastructure code, and open pull requests across existing workflows.

A practical comparison starts with the work pattern.

| Category | Examples | Best fit | Main trade-off |
|---|---|---|---|
| AI coding assistants | GitHub Copilot for IDE assistance, JetBrains AI Assistant, Gemini Code Assist | Individual developers, autocomplete, single-line suggestions, generated documentation, IDE support | Low friction, but limited control over larger delivery risk |
| Terminal-first coding agents | Claude Code, Gemini CLI, Codex CLI, Kilo Code | Power users, terminal workflows, explicit control, MCP support, longer tasks | Powerful for experienced developers, but depends heavily on setup, permissions, and review discipline |
| Background and cloud agents | GitHub Copilot cloud agent, Codex cloud workflows, Devin and similar background agents | Parallel agents, complex tasks, pull requests, enterprise teams, background work | Useful for scale, but governance, security, permissions, and cost transparency matter more |
| Quality-aware delivery agents | Tero and agents built with Abstracta Intelligence | Test generation, requirements analysis, code quality signals, release readiness, business context | Works best when connected to real delivery workflows, quality strategy, and human expertise |

Enterprise Teams Need One More Layer: Quality Governance

Quality-aware delivery agents help connect AI-assisted development with requirements, tests, code quality signals, release readiness, and business context. Abstracta Intelligence, built on Tero, gives enterprise teams a way to turn agent output into evidence for better delivery decisions.

The right tool should fit the workflow. The right operating model should protect the software.

Run a Pilot with Abstracta Intelligence

The Search Starts With Tools. The Risk Starts in the Workflow.

The phrase AI tool for coding usually points to a broad category: autocomplete, code generation, chat inside the IDE, refactoring help, documentation support, and debugging assistance. The phrase AI agent for coding carries a different expectation.

An agent does more than suggest code. It may plan a task, inspect files, modify several parts of a codebase, run commands, generate tests, interact with tools, and create a pull request.

That shift changes the risk profile.

- A coding assistant helps a developer complete a task.

- A coding agent starts touching the delivery workflow.

It may change code that affects business rules, integrations, user permissions, audit behavior, performance, data handling, or production reliability.

The harder problem appears once the agent starts working on real systems.

Can the team tell whether the output is correct?

Can reviewers understand the assumptions behind the change?

Can tests detect meaningful regression?

Can leaders see whether AI adoption improves delivery or increases hidden rework?

Those questions matter in every software organization. They become urgent in fintech, banking, healthcare, legal tech, retail, and any environment where software quality affects revenue, risk, or customer trust.

The Quality Intelligence Gap Behind AI Coding Agents

AI coding agents can produce work faster than many teams can evaluate it.

That gap shows up in daily delivery:

- Pull requests arrive with generated tests, but nobody knows whether they test the right behavior.

- User stories become prompts, but the acceptance criteria are incomplete.

- CI passes, but the suite misses the risk-heavy paths.

- Developers spend less time typing and more time reviewing assumptions.

- Leaders see more activity, but release confidence does not improve.

We call this the Quality Intelligence Gap.

It’s the distance between what AI can produce and what teams need to understand before making safe, value-aligned software delivery decisions.

Abstracta Intelligence was built around that gap. It is a platform that connects systems, context, and people to help teams close the Quality Intelligence Gap across software delivery.

That framing matters because AI-assisted development affects more than productivity. It is also:

A quality story.

A governance story.

A delivery story.

When AI agents enter the workflow, teams need a stronger way to connect generated output with requirements, tests, risks, evidence, and human judgment.

AI Agents Expose the Discipline a Team Already Has

Some engineering practices have been around for years: BDD, TDD, test automation, contract testing, peer review, CI/CD, small batch delivery, and risk-based testing.

They can sound old compared with agentic AI. But, in practice, AI agents make them more valuable.

- BDD gives teams a shared language for behavior.

- TDD gives developers and agents a fast signal before implementation grows too large.

- Automated tests give reviewers evidence.

- CI/CD creates a controlled path from change to validation.

- Risk-based testing helps teams focus attention where failure carries higher cost.

The 2025 DORA report describes a more current version of this tension. AI adoption is now associated with higher software delivery throughput and stronger product performance, but it still has a negative relationship with software delivery stability. DORA frames AI as an amplifier: it can strengthen teams with clear workflows, mature version control, strong automated testing, and fast feedback loops, while exposing weaknesses in teams with fragmented tooling, siloed data, or fragile infrastructure.

That matches what many teams see in practice:

✔️ AI can help people produce more code.

✔️ More code doesn’t automatically become better software.

A Coding Agent Needs More Than a Repo

A repository gives an AI coding agent files, dependencies, and structure, but real delivery work needs more context.

A useful agent handoff answers practical questions:

- What behavior should change?

- What behavior must stay stable?

- Which examples define success?

- Which tests count as evidence?

- Which risks need human review?

- Which systems provide context?

- Which changes require traceability?

Without that contract, the agent fills in the blanks.

Sometimes it fills them well. Sometimes it chooses an implementation that passes a narrow test and still violates a business rule. Sometimes it adds a workaround that increases technical debt. Sometimes it writes tests that validate its own assumption instead of the product behavior.
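One way to make that contract explicit is to write the handoff questions down as a structured record and check which ones are still unanswered before the agent starts. The sketch below is illustrative only: the `AgentHandoff` class, its field names, and the sample ticket (including the `tests/test_retry.py` path) are hypothetical and not part of any specific tool.

```python
from dataclasses import dataclass, field, fields


@dataclass
class AgentHandoff:
    """Hypothetical handoff record; each field mirrors one handoff question."""
    behavior_to_change: str = ""
    behavior_to_keep_stable: list[str] = field(default_factory=list)
    success_examples: list[str] = field(default_factory=list)
    evidence_tests: list[str] = field(default_factory=list)
    risks_for_human_review: list[str] = field(default_factory=list)
    context_systems: list[str] = field(default_factory=list)
    traceability_required: list[str] = field(default_factory=list)


def missing_answers(handoff: AgentHandoff) -> list[str]:
    """Return the names of empty fields, i.e. the blanks the agent would fill in."""
    return [f.name for f in fields(handoff) if not getattr(handoff, f.name)]


# A ticket that only says what to change and which test file exists.
ticket = AgentHandoff(
    behavior_to_change="Retry failed payments automatically",
    evidence_tests=["tests/test_retry.py"],  # hypothetical path
)
print(missing_answers(ticket))
```

A check like this does not judge whether the answers are good. It only shows where the agent would be guessing, which is where a reviewer may want to look first.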

This problem is common in complex systems.

A banking platform may contain rules spread across services, batch processes, audit logs, legacy systems, and compliance procedures.

A fintech platform may combine payment flows, fraud prevention rules, identity verification, transaction limits, third-party integrations, and regulatory requirements.

A legal tech platform may depend on jurisdiction, document type, access control, and confidentiality rules.

The codebase matters, but the surrounding knowledge matters as much.

An AI Coding Agent Readiness Model

Before adopting another AI coding agent, teams should assess the system around it.

1. Requirement Readiness

AI coding agents work better when the intent is clear. A ready team can describe the user goal, business rule, acceptance criteria, constraints, edge cases, data conditions, expected tests, and affected systems.

2. Example Readiness

Examples turn product intent into usable context. BDD scenarios, acceptance tests, API examples, production incidents, and support cases help agents understand normal flows, failure flows, boundary conditions, and compliance-sensitive behavior.

3. Test Readiness

A ready team has fast, meaningful tests across the right layers: unit, integration, contract, end-to-end, performance, security, and accessibility. Coverage matters, but useful regression detection matters more.

4. Context Readiness

AI agents need project context: architecture decisions, domain vocabulary, coding standards, logs, known incidents, deployment constraints, and team-specific rules. Context helps, but teams still need judgment to decide what matters.

5. Governance Readiness

AI-generated work needs clear rules: what agents can change, what they cannot change, who reviews high-risk changes, how generated tests are evaluated, how activity is logged, and when human approval is required.

6. Human Review Readiness

AI agents change code review. Reviewers need to check intent, assumptions, risk, and system impact. The key questions are: does the change match the business rule, are the tests meaningful, and is the pull request small enough to review well?

Human judgment remains central.
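Governance rules such as "what agents can change" and "when human approval is required" are easier to enforce when they are written as data. The following is a minimal sketch, assuming a team expresses those rules as path patterns. The patterns, bucket names, and file paths are hypothetical placeholders, and real policies would likely consider more than file paths.

```python
from fnmatch import fnmatchcase

# Hypothetical policy. The glob patterns are placeholders, not from a real repository.
BLOCKED = ["infra/prod/*", "secrets/*"]
NEEDS_HUMAN_APPROVAL = ["services/payments/*", "db/migrations/*"]


def classify_change(path: str) -> str:
    """Return the governance bucket for one changed file path."""
    if any(fnmatchcase(path, pattern) for pattern in BLOCKED):
        return "blocked"
    if any(fnmatchcase(path, pattern) for pattern in NEEDS_HUMAN_APPROVAL):
        return "needs_human_approval"
    return "agent_allowed"


def review_plan(changed_paths: list[str]) -> dict[str, list[str]]:
    """Group every changed path by bucket so reviewers see the risky ones first."""
    plan: dict[str, list[str]] = {
        "blocked": [],
        "needs_human_approval": [],
        "agent_allowed": [],
    }
    for path in changed_paths:
        plan[classify_change(path)].append(path)
    return plan


plan = review_plan(["services/payments/retry.py", "docs/readme.md", "secrets/api.key"])
print(plan)
```

Checking the blocked patterns first means a path that matches both lists is treated as blocked, which is the more conservative choice for this sketch.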

Case Study: Safer ACH Payment Retries

Challenge

A US fintech team wanted to improve how its platform handled failed ACH payments. The request looked simple: retry failed payments automatically. But the team had more than 10 ACH return scenarios across insufficient funds, invalid account data, authorization issues, processor constraints, and operations review.

Before the change, too many cases depended on manual interpretation. Payment exceptions took up to 2 business days to review, and edge cases were difficult to trace across tickets, logs, and test evidence.

An AI coding agent could help update the service, add tests, and open a pull request. The risk was whether the generated logic matched the real payment behavior.

Solution

Abstracta Intelligence, built on Tero, created a quality layer around the workflow. Tero-powered agents helped connect business rules, ACH return scenarios, test data, processor constraints, logs, and delivery signals before the code moved forward.

The team moved from a generic ticket (“retry failed payments”) to a clearer model with 12 documented payment scenarios, expected system behavior, test coverage expectations, and review criteria for financial risk, customer communication, and auditability.
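To illustrate what a documented scenario model can look like in code, here is a small sketch that maps ACH return codes to an expected system behavior. It is not the team's actual logic: the chosen codes, the actions assigned to them, and the `MAX_RETRIES` value are assumptions for illustration, and a real mapping would come from the team's documented scenarios, processor constraints, and the applicable ACH network rules.

```python
from enum import Enum


class Action(Enum):
    RETRY = "retry"
    FIX_ACCOUNT_DATA = "fix_account_data"
    STOP_AND_NOTIFY = "stop_and_notify"
    OPERATIONS_REVIEW = "operations_review"


# Illustrative mapping only; the action assigned to each code is an assumption.
SCENARIOS = {
    "R01": Action.RETRY,             # insufficient funds
    "R09": Action.RETRY,             # uncollected funds
    "R03": Action.FIX_ACCOUNT_DATA,  # no account / unable to locate account
    "R04": Action.FIX_ACCOUNT_DATA,  # invalid account number
    "R07": Action.STOP_AND_NOTIFY,   # authorization revoked by customer
    "R10": Action.STOP_AND_NOTIFY,   # customer advises not authorized
}

MAX_RETRIES = 2  # assumed limit for this sketch


def decide(return_code: str, attempts_so_far: int) -> Action:
    """Pick the expected behavior for a returned payment."""
    # Unknown codes fall back to operations review instead of a silent retry.
    action = SCENARIOS.get(return_code, Action.OPERATIONS_REVIEW)
    if action is Action.RETRY and attempts_so_far >= MAX_RETRIES:
        return Action.OPERATIONS_REVIEW
    return action


print(decide("R01", attempts_so_far=0))
print(decide("R01", attempts_so_far=2))
print(decide("R99", attempts_so_far=0))
```

Keeping the table separate from the decision function gives reviewers one place to compare against the business rules, and each row can be paired with a test that serves as release evidence.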

Results

The coding agent helped produce the implementation faster. Abstracta Intelligence helped the team evaluate whether the change was safe to release.

The team increased automated coverage for retry scenarios from roughly 45% to 85%, reduced manual review time for payment exceptions from 2 business days to same-day analysis, and improved traceability across requirements, tests, logs, and pull request evidence.

The result was AI-assisted delivery with stronger control: faster implementation, clearer review, fewer ambiguous edge cases, and better evidence for release decisions.

Need to Know If Your Team Is Ready for AI Coding Agents?

Abstracta helps engineering and QA leaders assess the quality system around AI-assisted development. We look at requirements, examples, test automation, CI/CD signals, governance, team workflows, and delivery risks to identify where AI agents can create value without adding hidden risk.

Through Abstracta Intelligence and Tero, we help teams move from scattered AI experimentation to governed, measurable AI adoption in software delivery.

FAQs about AI Agents for Coding

What Is the Best AI Agent for Coding?

The best AI agent for coding depends on the team’s workflow, codebase, and delivery risk. GitHub Copilot, Claude Code, Cursor, Codex, Devin, Windsurf, Replit Agent, and other AI coding tools fit different needs. The best AI coding agent is the one that supports existing workflows, multi-file changes, code quality, and human review.

What Are AI Coding Agents?

AI coding agents are AI tools that can help plan, write, edit, test, and review code across a software development workflow. Unlike basic coding assistants, they can often inspect repositories, understand project context, make multi-file changes, run commands or tests, and support pull request workflows. Some AI coding agents can behave like an autonomous agent on well-defined tasks, but strong results still depend on clear requirements, reliable tests, governance, and human review.

Which AI Coding Agents Should Teams Compare?

Teams comparing AI coding agents should evaluate how each tool supports real software development workflows, project context, multi-file changes, code quality, cost transparency, and human review.

- GitHub Copilot is one of the most widely used AI coding tools and is strong for editor-based workflows, code suggestions, IDE support, and GitHub-based pull request workflows. Stack Overflow’s 2025 Developer Survey describes ChatGPT and GitHub Copilot as the clear market leaders for out-of-the-box AI assistance.

- Claude Code is an agentic coding tool for working across existing codebases. It can read codebases, edit files, run commands, and integrate with development tools, which makes it relevant for complex engineering tasks and deeper architectural reasoning.

- Cursor supports AI-native IDE workflows with project context, Agent mode, Rules, Skills, MCP servers, CLI, and Teams & Enterprise setup. It is useful for developers who want in-editor workflows, project-aware assistance, and multi-file review capabilities.

- Windsurf includes Cascade, an agentic AI assistant with Code and Chat modes, tool calling, checkpoints, real-time awareness, and codebase context. Windsurf also documents inline and multi-line autocomplete based on code context.

- Replit Agent can work well for beginners or rapid prototyping because Replit lets users build, run, and publish apps from the browser, which reduces local setup and deployment friction.

- Codex is relevant for teams evaluating cloud and background coding workflows connected to repositories, pull requests, and parallel agent tasks.

- Gemini CLI is relevant for terminal workflows that need explicit control, built-in tools, MCP support, and help with tasks such as fixing bugs, creating features, and improving test coverage.

- Augment Code and Kilo Code are also worth comparing when teams need alternative AI coding agents for existing code, file edits, agent workflows, or IDE/CLI-based development.

Enterprise teams should compare each tool by code quality, multi-file changes, test generation, pull request workflows, security, governance, API costs, enterprise pricing, and human review.

What Are the Best AI Coding Agents for Enterprise Teams?

The best AI coding agents for enterprise teams are tools that support existing code, multi-file changes, pull requests, test generation, infrastructure code, security controls, and governance. Enterprise teams should evaluate AI agents based on workflow fit, code quality, cost transparency, API key handling, enterprise pricing, and review needs. The right tool should maintain consistency with existing patterns, folder structure, and software development standards.

What Is the Difference Between an AI Coding Agent and an AI Coding Assistant?

The difference between an AI coding agent and an AI coding assistant is the scope of work. AI coding assistants usually create minimal friction inside existing tools, helping with autocomplete, single-line suggestions, generated documentation, code explanation, or IDE support. AI coding agents can inspect a codebase, plan tasks, edit files, make multi-file changes, run commands, generate tests, and support pull request workflows. That added autonomy creates stronger code quality and governance needs.

Is Claude Code a Coding Agent?

Claude Code is an agentic coding tool that can read codebases, edit files, run commands, and integrate with development tools. It supports terminal-first workflows, while also being available across IDE, desktop, and browser surfaces. Claude Code is relevant for developers and power users who need deep reasoning, explicit control, and support for complex engineering tasks across existing code.

What Does GitHub Copilot Cloud Agent Do?

GitHub Copilot cloud agent can research a repository, create an implementation plan, make iterative code changes on a branch, let developers review the diff, and create a pull request when ready. It is useful for teams that want background agents inside GitHub workflows. For enterprise teams, it still requires quality gates, security controls, permissions, and human review.

What Is Codex for Software Development?

Codex is OpenAI’s coding agent for software development workflows. Codex can read, edit, and run code, work on tasks in the cloud, and run background tasks in parallel. Codex can also work with GitHub pull requests and review pull request context. It is relevant for teams comparing cloud agents, background agents, AI code review, and coding tools beyond a single prompt inside an IDE.

What Is Gemini CLI?

Gemini CLI is an open-source AI agent for terminal workflows. Gemini CLI gives developers access to Gemini from the command line and uses built-in tools plus local or remote MCP servers. It can support complex tasks such as fixing bugs, creating new features, and improving test coverage. It is useful for power users who want explicit control and MCP support.

Are Cursor, VS Code Extensions, and AI IDEs the Same as AI Coding Agents?

Cursor, VS Code extensions, and AI IDEs can include agentic capabilities, but they are not the same as AI coding agents. An AI IDE focuses on the development environment: project context, navigation, file edits, in-editor workflows, and rapid prototyping. An AI coding agent focuses on completing coding tasks across files, tools, or repositories. Cursor docs cover Agent mode, Rules, Skills, MCP servers, CLI, and Teams & Enterprise setup.

How Do Pricing Models Affect AI Coding Tools?

Pricing models affect AI coding tools because teams may face free tier limits, seat-based plans, enterprise pricing, usage-based billing, API costs, or model-specific consumption. More capable tools can create cost predictability issues when many developers run longer tasks, deep reasoning, or parallel agents. Enterprise teams should evaluate value per task, cost transparency, API key handling, and limits before scaling usage.

What Should Teams Check Before Choosing an AI Coding Agent?

Teams should check workflow fit, project context, code quality, pricing, API costs, MCP support, security controls, on-premises options, enterprise pricing, and whether the tool uses a capable model for their coding tasks. They should also test output quality, multi-file edits, existing code support, and consistency with existing patterns. MCP support extends the agent across repositories, existing tools, data, and delivery workflows.

Do AI Coding Agents Improve Code Quality?

AI coding agents can improve code quality when they work with clear requirements, reliable tests, business context, and human review. They can also generate code that looks correct but misses security rules, architecture, or business behavior. Strong CI/CD, BDD, TDD, test generation, and quality engineering make AI-generated code safer to review and release.

Why Do AI Coding Agents Need Business Context?

AI coding agents need business context because code decisions depend on more than syntax. Business context explains why the task matters, which rules apply, what risks exist, and what evidence reviewers need. Without business context, a coding agent may write code that passes basic checks but fails the real workflow, especially in fintech, banking, healthcare, legal tech, and other high-risk environments.

Can AI Coding Agents Replace QA?

AI coding agents cannot replace QA. They make strong QA and quality engineering more important. As AI agents write code, edit files, generate tests, and open pull requests faster, teams need better examples, environments, risk analysis, review criteria, and release evidence. QA becomes more strategic when AI-assisted development reaches enterprise scale.

What Is Quality Intelligence?

Quality Intelligence is Abstracta’s approach to connecting AI, context, human judgment, and delivery signals across software delivery. It helps teams understand code quality, testing, logs, risks, and business impact across systems instead of relying on isolated test results. For AI-assisted development, Quality Intelligence helps teams evaluate whether agent-generated work is safe, useful, and ready for release.

What Is the Quality Intelligence Gap?

The Quality Intelligence Gap is the distance between what AI agents can produce and what teams need to understand before making safe delivery decisions. The gap appears when AI-generated code, tests, documentation, or pull requests move faster than requirements, test coverage, logs, governance, and human review. Enterprise teams feel this gap most when AI adoption grows without enough structure.

How Does Abstracta Intelligence Support AI-Assisted Development?

Abstracta Intelligence supports AI-assisted development by creating a quality layer around real delivery workflows. It connects AI agents, requirements, tests, logs, delivery signals, governance, human expertise, and impact visibility. In Abstracta’s positioning, Abstracta Intelligence is an enterprise AI platform that augments QA and engineering teams, accelerates AI adoption in real workflows, and uses Tero as its agentic framework.

What Is Tero?

Tero is Abstracta’s open-source agentic framework for building context-aware AI agents in QA and software delivery workflows. Tero is the foundation behind Abstracta Intelligence. It helps teams create agents that support requirements analysis, test ideas, log inspection, failure summaries, delivery signals, and release decisions. Tero is not another autocomplete tool; it addresses the quality and delivery workflows around AI-assisted development.

Should Engineering Teams Improve Tests Before Choosing an AI Coding Agent?

Engineering teams do not need perfect tests before choosing an AI coding agent, but weak tests limit the value. Strong test strategy, BDD examples, TDD practices, CI/CD checks, and clear review criteria help agents get better feedback. They also help humans review generated code with more confidence. A coding agent can write code faster; quality engineering helps decide whether that code is correct, safe, and maintainable.

About Abstracta

With nearly two decades of experience and a global presence, Abstracta is a technology company that helps organizations deliver high-quality software faster by combining AI-powered quality engineering with deep human expertise.

Our expertise spans industries and complex delivery environments. That’s why we’ve built robust partnerships with industry leaders Microsoft, Datadog, Tricentis, Perforce BlazeMeter, Sauce Labs, and PractiTest to provide the latest in cutting-edge technology.

We invite you to explore our solutions and case studies.



Natalie Rodgers, Marketing Team Lead at Abstracta
