A banking-focused approach to AI governance: inventory AI use, classify risk, map data flows, and build audit-ready oversight for regulated environments.

Senior banking and fintech leaders are discovering that AI scale is constrained less by AI capabilities and more by leadership visibility into where AI operates, what sensitive data it touches, and who can intervene when outcomes shift.

AI governance strategic visibility is the leadership capability to see where AI operates, what data it handles, who owns it, and how risk management decisions hold up as AI systems scale across workflows.

This matters because once AI moves from pilots to production, the organization inherits operational and compliance exposure at scale. The adoption numbers below show how quickly that shift is already happening.

In a 2025 survey of 400 banks worldwide, 11% reported generative AI already in production and 43% reported active implementation, which signals how fast AI adoption is moving into operational reality.

In the U.S., Bank Director’s 2025 Technology Survey found 62% of bank leaders have experimented with AI, and 66% already have an AI acceptable-use policy.

Regulators are also sharpening expectations. In Canada, OSFI’s 2025 Model Risk Management guideline (E-23) explicitly covers AI and machine learning models and sets lifecycle expectations for federally regulated financial institutions, reinforcing why effective AI governance must be operational, not theoretical.

In Latin America, AI is already in production across banking and fintech workflows; yStats’ 2025 data shows that more than 40% of organizations now face execution and AI-talent gaps as the primary barrier to safe scale. According to the same report, the number of fintech firms in the region expanded by over 300% between 2017 and 2023. However, the report states that “Fintech leadership drives adoption, but scaling remains uneven”.

This highlights how fintech-led adoption has accelerated faster than governance and operational maturity.

This article explains how to operationalize AI governance strategic visibility in banking and fintech using a concrete use case and a repeatable checklist leaders can sponsor across legal, risk, data, and engineering teams.

What “Strategic Visibility” Means in AI Governance

AI governance strategic visibility gives leaders a reliable view of how AI operates across workflows, data handling, decision points, and accountability. It connects business objectives, organizational values, and evolving regulations to day-to-day AI operations.

Leaders need to know where AI is in use and which AI models and technologies run in production, and above all which risk assessments apply to each system across the AI lifecycle. Strategic visibility supports effective governance through evidence. Audit trails, data quality signals, model drift indicators, and documented human intervention points allow legal and compliance teams to review outcomes with regulatory scrutiny in mind.

If your bank or fintech needs executive visibility across AI adoption, risk management, and regulatory compliance, explore our financial software development services to scope an engagement that fits your AI strategy and your delivery reality.

Key Components of AI Governance Strategic Visibility

- Inventory and discovery: a current map of AI use across onboarding, digital lending, fraud/AML, customer communications, and internal operations, including shadow AI.

- Risk assessment and tiering: proportional controls based on customer impact, sensitive data exposure, reversibility of outcomes, and regulatory scrutiny across jurisdictions.

- Data flow mapping: traceable data handling across prompts, inference, vendor integrations, and core systems, with data integrity and data protection signals.

- Control implementation: oversight mechanisms, audit trails, and human-in-the-loop thresholds tied to high-impact decision points.

- Stakeholder alignment: clear approvals, evidence standards, and escalation paths for legal and compliance teams, risk, data leaders, and executive owners.

Managing AI Risks and Compliance in Banking and Fintech

Managing AI-related risks in banking and fintech depends on evidence that legal and compliance teams can defend. Leaders need a small operating baseline that stays valid as AI systems evolve and scale.

Data governance protects sensitive data and supports regulatory compliance. Proactive risk management in AI addresses bias, security concerns, and unintended consequences. Human-in-the-loop (HITL) protocols define when human review is required for high-stakes AI decisions.

The checklist below summarizes minimum controls teams can implement while keeping delivery momentum:

- Define one accountable owner and one escalation path per AI workflow and high-impact outcome.

- Document sensitive data exposure and data handling controls per workflow.

- Maintain audit trails that capture inputs, outputs, model version, and decision context for AI responses.

- Set human oversight thresholds, including human intervention triggers for high-impact outcomes.

- Run recurring risk assessments across the AI lifecycle, including model drift checks and retraining approvals.

- Validate intellectual property constraints for prompts, training data, and vendor AI solutions.

- Track shadow AI signals by team and tool to prevent unmanaged AI use in production.

This operating baseline shows how teams translate governance policies into control at runtime.

If you want to operationalize this baseline in your bank or fintech, engage Abstracta to scope a focused delivery plan across workflows, evidence, and oversight through our financial software development services.

Why Governance Alone Is No Longer Enough

Many governance frameworks define responsible AI practices at a policy level. Banking and fintech execution requires a governance program that stays accurate as AI systems evolve, integrations change, and AI-driven innovation expands to new workflows.

Board-level attention is rising, yet execution remains uneven. In NACD’s 2025 Private Company Governance Survey, 62% of boards reported setting aside agenda time to learn about AI and its potential impact.

Treating AI as a strategic asset still requires operational visibility to prevent unintended consequences and reputational risks, and traceability and control matter most in higher-risk contexts. Financial services leaders benefit when AI governance policies connect directly to how AI operates in production.

Signals Your Organization Needs AI Strategic Visibility Now

Strategic visibility becomes urgent when AI initiatives spread across product teams and vendors, when legal teams cannot trace AI use across workflows, and when audit trails do not reconstruct why an AI response was approved in a specific context.

Where the Governance Gap Forms in Real Organizations

The governance gap appears when AI capabilities outpace operational ownership. AI assistants appear in product teams, data leaders adopt tools to accelerate analysis, and data scientists integrate models into workflows with limited shared oversight.

The gap also appears when AI initiatives multiply across vendors. SaaS procurement, internal experimentation, and “fast pilots” create parallel AI use without consistent risk management decisions, audit trails, or clear accountability.

The gap becomes measurable when AI systems scale. More systems mean more integrations, more sensitive data pathways, more model drift risk, and more dependency on human oversight to resolve edge cases.

Risk-Based Tiering That Works in Banking and Fintech

A risk-based tiering model keeps governance proportional as AI systems evolve. A practical starting point classifies AI workflows into tiers based on customer impact, sensitive data exposure, and reversibility of outcomes. For example, customer-facing generative AI responses often require stricter human oversight and audit trails than internal summarization tools, while fraud detection triage requires stronger drift monitoring and evidence standards.

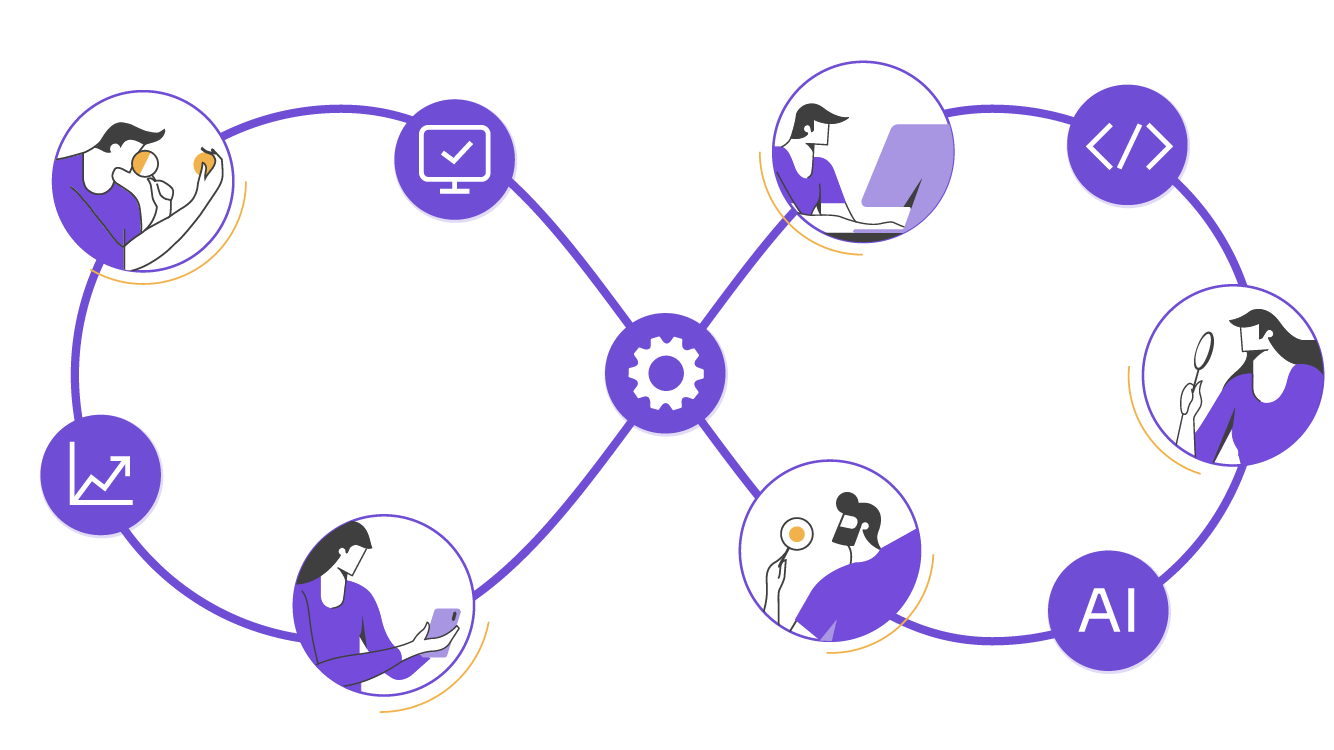

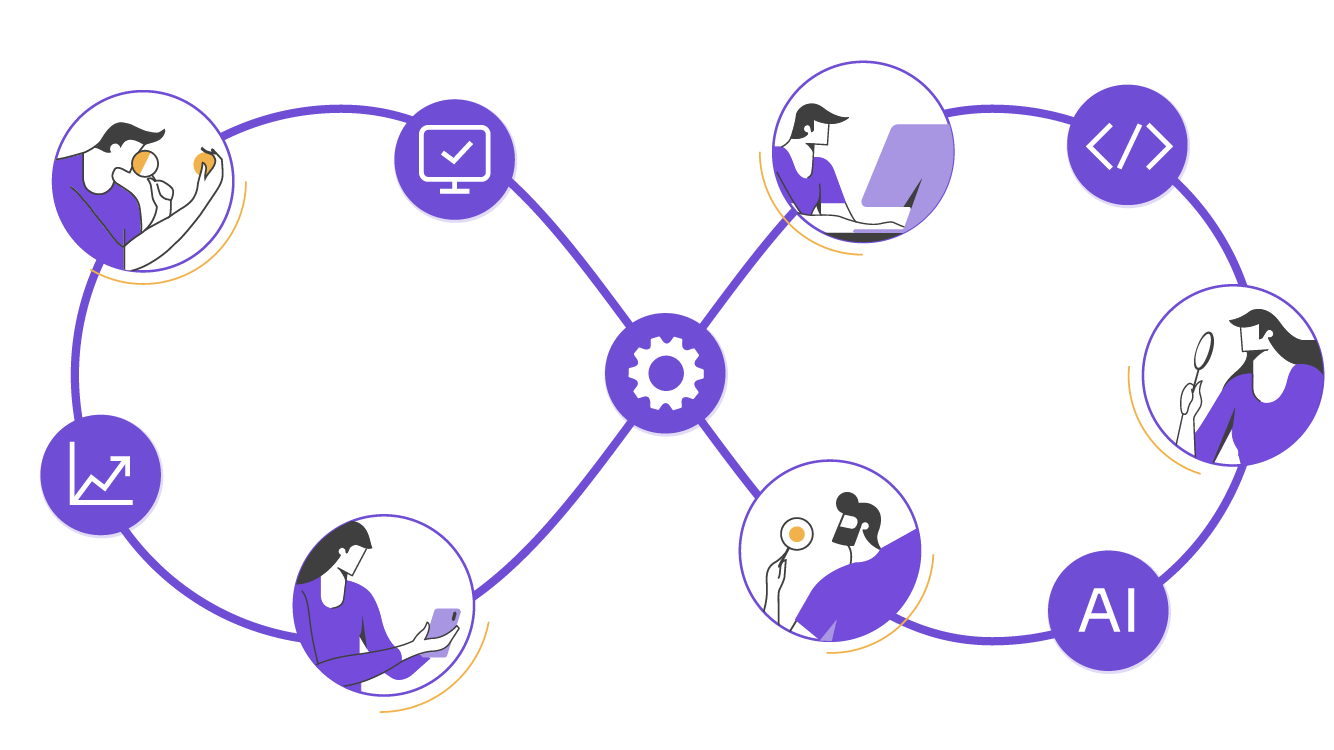
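The tiering logic described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed model: the `Level` scale, the `WorkflowProfile` fields, the three-tier numbering, and the "worst factor wins" rule are all assumptions chosen for clarity. A real tiering model would be calibrated with risk and compliance teams.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    """Hypothetical three-point scale for each tiering factor."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class WorkflowProfile:
    name: str
    customer_impact: Level
    sensitive_data_exposure: Level
    irreversibility: Level  # HIGH means outcomes are hard to reverse


def assign_tier(profile: WorkflowProfile) -> int:
    """Return tier 1 (strictest controls) to tier 3 (lightest controls).

    Assumption: the single worst factor decides the tier, so one HIGH
    rating is enough to place a workflow in tier 1.
    """
    worst = max(
        profile.customer_impact,
        profile.sensitive_data_exposure,
        profile.irreversibility,
    )
    return {Level.HIGH: 1, Level.MEDIUM: 2, Level.LOW: 3}[worst]


# Illustrative profiles only; the ratings are invented for the example.
customer_chat = WorkflowProfile("customer-facing genAI replies", Level.HIGH, Level.MEDIUM, Level.MEDIUM)
internal_summary = WorkflowProfile("internal summarization", Level.LOW, Level.LOW, Level.LOW)

print(assign_tier(customer_chat))     # tier for the customer-facing workflow
print(assign_tier(internal_summary))  # tier for the internal tool
```

A conservative "worst factor wins" rule is easy to explain in an audit, which is why it is used here. A weighted score is an alternative when teams need finer gradations.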

A Practical Model for Effective AI Governance Strategic Visibility

This model focuses on information that leaders can act on. Each element supports responsible innovation while balancing innovation with regulatory compliance and business strategy.

1) Inventory of AI Systems and AI Use

An AI use case inventory serves as a centralized database that documents all AI applications, including their risk classifications. It links each AI system to its owner, workflow location, and business objectives. It includes internal models, vendor AI solutions, and agentic components such as AI agents used for decision support or execution.

A usable inventory captures data handling notes, whether sensitive data is involved, required oversight mechanisms, and the minimum audit trails needed for regulatory compliance. It also flags shadow AI patterns by channel and team. That visibility helps legal teams and compliance risk owners decide what to approve, replace, or constrain.
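As a rough sketch of what one inventory record could look like, the Python example below models a single entry and reports its governance gaps. The `AIInventoryEntry` field names and the gap rules in `inventory_gaps` are hypothetical and only mirror the attributes listed above. They are not a standard schema.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AIInventoryEntry:
    """One row of a hypothetical AI use case inventory."""
    system_name: str
    owner: Optional[str]            # accountable owner, None if unknown
    workflow: str                   # e.g. "onboarding", "fraud/AML"
    business_objective: str
    source: str                     # "internal", "vendor", or "agent"
    handles_sensitive_data: bool
    risk_tier: Optional[int]        # None until a risk classification exists
    oversight_mechanisms: List[str] = field(default_factory=list)
    approved: bool = False          # False may indicate shadow AI


def inventory_gaps(entry: AIInventoryEntry) -> List[str]:
    """List the governance gaps found in a single inventory entry."""
    gaps = []
    if not entry.owner:
        gaps.append("no accountable owner")
    if entry.risk_tier is None:
        gaps.append("no risk classification")
    if entry.handles_sensitive_data and not entry.oversight_mechanisms:
        gaps.append("sensitive data without oversight mechanism")
    if not entry.approved:
        gaps.append("not approved (possible shadow AI)")
    return gaps


# Invented example: a vendor assistant adopted by a team without review.
entry = AIInventoryEntry(
    system_name="vendor-chat-assistant",
    owner=None,
    workflow="customer communications",
    business_objective="reduce response time",
    source="vendor",
    handles_sensitive_data=True,
    risk_tier=None,
)
for gap in inventory_gaps(entry):
    print(gap)
```

Running the same check over every entry gives legal and compliance owners a short list of systems to approve, replace, or constrain.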

2) Data Integrity and Data Protection Map

A data map documents data handling for each AI workflow, including sensitive data exposure and data quality constraints. It also supports data integrity checks that prevent silent degradation in AI-generated answers and AI responses.

This map should be legible to legal and compliance teams. Clear ownership of data protection controls reduces AI-related risks during audits and incident response.
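One way to make such a map checkable is to record each data hop and flag the ones that send sensitive data outside the organization without the expected controls. The sketch below is illustrative only: `DataHop`, the `SENSITIVE_CLASSES` labels, and the default control names are assumptions, and real classifications would come from the institution's own data governance policy.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple

# Hypothetical data classes treated as sensitive in this example.
SENSITIVE_CLASSES = frozenset({"pii", "account_data", "credentials"})


@dataclass(frozen=True)
class DataHop:
    """One step in a data flow, e.g. prompt -> vendor inference API."""
    source: str
    destination: str
    data_classes: FrozenSet[str]
    controls: FrozenSet[str]
    external: bool = False  # True when data leaves the organization


def unprotected_hops(
    hops: List[DataHop],
    required_controls: FrozenSet[str] = frozenset({"encryption_in_transit", "masking"}),
) -> List[Tuple[DataHop, List[str]]]:
    """Return external hops that carry sensitive data and lack a required control."""
    flagged = []
    for hop in hops:
        carries_sensitive = bool(hop.data_classes & SENSITIVE_CLASSES)
        missing = required_controls - hop.controls
        if carries_sensitive and hop.external and missing:
            flagged.append((hop, sorted(missing)))
    return flagged


# Invented flow: an onboarding form feeding a vendor model and a core system.
flow = [
    DataHop("onboarding_form", "vendor_llm", frozenset({"pii"}),
            frozenset({"encryption_in_transit"}), external=True),
    DataHop("onboarding_form", "core_banking", frozenset({"pii"}),
            frozenset({"encryption_in_transit"}), external=False),
]
for hop, missing in unprotected_hops(flow):
    print(f"{hop.source} -> {hop.destination}: missing {missing}")
```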

3) Oversight Mechanisms and Human Oversight Design

Oversight mechanisms define where humans review, approve, or intervene. Human oversight becomes concrete when teams define thresholds, escalation paths, and human intervention triggers for high-impact outcomes.

This design supports responsible AI governance without slowing delivery. Teams move faster when they know which decisions need review and which can proceed with monitoring.
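The thresholds and triggers described above can be expressed as a small routing function. This is a sketch under stated assumptions: the tier numbering (1 is strictest), the `min_confidence` and `review_amount` defaults, and the three `Route` outcomes are hypothetical values picked for illustration, not recommended settings.

```python
from enum import Enum


class Route(Enum):
    AUTO_PROCEED = "auto_proceed"   # proceeds with monitoring only
    HUMAN_REVIEW = "human_review"   # a reviewer approves before release
    ESCALATE = "escalate"           # goes to the accountable owner


def route_decision(
    tier: int,
    confidence: float,
    amount: float,
    *,
    min_confidence: float = 0.85,    # hypothetical threshold
    review_amount: float = 10_000.0  # hypothetical monetary trigger
) -> Route:
    """Decide whether an AI outcome needs human intervention.

    Assumptions: tier 1 is the highest-risk tier, `confidence` is a model
    score in [0, 1], and `amount` is the monetary value at stake.
    """
    if tier == 1 and amount >= review_amount:
        return Route.ESCALATE
    if tier == 1 or confidence < min_confidence:
        return Route.HUMAN_REVIEW
    return Route.AUTO_PROCEED


print(route_decision(tier=1, confidence=0.99, amount=50_000).value)
print(route_decision(tier=3, confidence=0.95, amount=100).value)
```

Keeping the thresholds as named parameters makes them reviewable artifacts: risk owners can approve a change to `min_confidence` the same way they approve a policy change.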

4) Audit Trails That Survive Real Investigations

Audit trails record inputs, outputs, model version, and decision context for meaningful review. They support effective AI governance when a bank needs to explain how AI influenced a decision, not only what result appeared.

Audit trails also reduce reputational risks. They provide a defensible narrative under regulatory scrutiny and internal corporate governance review.
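A minimal sketch of such a record follows, using only the Python standard library. It captures the four elements named above and, as an added assumption not taken from this article, chains each record to the previous one with a SHA-256 hash so that later tampering is detectable. The function names and the in-memory list are illustrative, and a production trail would live in durable, access-controlled storage.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder hash for the first record


def _digest(body: dict) -> str:
    """Hash a record body using a canonical JSON encoding."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def append_audit_record(trail, *, inputs, outputs, model_version, decision_context):
    """Append one record; all values must be JSON-serializable."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "inputs": inputs,
        "outputs": outputs,
        "model_version": model_version,
        "decision_context": decision_context,
        "prev_hash": trail[-1]["record_hash"] if trail else GENESIS_HASH,
    }
    record = {**body, "record_hash": _digest(body)}
    trail.append(record)
    return record


def verify_trail(trail) -> bool:
    """Check that no record was altered or removed from the middle of the chain."""
    prev_hash = GENESIS_HASH
    for record in trail:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        if record["prev_hash"] != prev_hash or _digest(body) != record["record_hash"]:
            return False
        prev_hash = record["record_hash"]
    return True


# Invented example values.
trail = []
append_audit_record(
    trail,
    inputs={"prompt": "Summarize the dispute for case 123"},
    outputs={"text": "Customer disputes a card charge."},
    model_version="summarizer-v2",
    decision_context={"workflow": "disputes", "reviewer": "analyst-7", "approved": True},
)
print(verify_trail(trail))
```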

5) Model Drift and Lifecycle Controls

Model drift monitoring tracks performance changes as data distributions shift. Lifecycle controls define who can update models, how rollbacks occur, and how changes get validated within AI Operations.

These controls matter more as AI systems scale. Stability requires clarity about the AI lifecycle rather than informal change management.
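To make drift monitoring concrete, the sketch below computes the Population Stability Index (PSI), a drift measure widely used in credit risk modeling. The article does not prescribe a specific metric, so PSI is an example choice here. The bin count, the `eps` floor for empty bins, and the equal-width binning over the baseline range are assumptions.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """Compare a baseline sample (`expected`) with a recent sample (`actual`).

    PSI = sum over bins of (a - e) * ln(a / e), where e and a are the
    share of baseline and recent values that fall in each bin.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline sample has no spread")
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range fall into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    p_exp = proportions(expected)
    p_act = proportions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(p_exp, p_act))


# Invented scores: the recent sample is shifted toward higher values.
baseline_scores = [i / 100 for i in range(100)]
recent_scores = [0.5 + i / 200 for i in range(100)]
print(population_stability_index(baseline_scores, recent_scores))
```

A commonly cited rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but those cutoffs are conventions rather than regulatory thresholds. Each institution should set and document its own.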

6) Legal, Compliance, and IP Integration

Legal teams and compliance teams need early involvement in governance practices, especially when generative AI touches customer communications or internal decision support. Intellectual property and data licensing constraints require explicit ownership, not assumptions.

This integration also supports responsible AI practices during vendor selection. Contracts, usage terms, and cross-border data protection requirements belong inside the governance program.

Where Tero and Abstracta Intelligence Fit

Some organizations need a practical layer that centralizes AI agents and makes their impact visible to leadership. Tero, our open-source platform for securely building AI agents for QA and Dev, supports that need. It integrates with existing tools, works with controlled context, and provides a dashboard that shows what AI does, where it runs, and how it influences workflows.

This visibility helps leaders govern AI adoption without slowing product teams. It also supports consistent oversight mechanisms by keeping inventory, usage signals, and operational context in one place.

Our solution Abstracta Intelligence complements this approach when you need to translate strategy into implementation. It focuses on designing governed AI-driven solutions that can operate reliably in regulated environments, with traceability aligned to business objectives.

In a Nutshell

AI governance strategic visibility turns AI governance into an operational capability that leaders can measure and act on. It supports responsible innovation, reduces compliance risks, and protects institutional trust as AI-driven systems scale.

In banking and fintech, effective governance depends on clarity about accountability, data integrity, and evidence. Strategic visibility provides that clarity across the full AI lifecycle.

FAQs About AI Governance Strategic Visibility

What Is AI Governance Strategic Visibility?

AI governance strategic visibility is the executive view of where AI operates, what data it uses, who owns it, and how oversight works. It supports effective AI governance through evidence.

How Does AI Governance Support Risk Management in Banking?

AI governance supports risk management by linking AI workflows to risk assessments, audit trails, and clear human oversight. This reduces unintended consequences under regulatory scrutiny.

What Creates Shadow AI in Regulated Organizations?

Shadow AI emerges when teams use AI assistants or vendor tools outside approved AI governance policies. This pattern increases data protection and intellectual property exposure.

How Do Audit Trails Reduce Compliance Risks?

Audit trails reduce compliance risks by recording inputs, outputs, context, and model versions for review. This evidence helps legal and compliance teams validate regulatory compliance.

What Should Human Oversight Look Like in AI Systems?

Human oversight in AI systems defines review points, escalation paths, and human intervention triggers tied to outcome severity. It keeps accountability clear when AI systems evolve.

How Do Governance Frameworks Create Competitive Advantage?

Governance frameworks create competitive advantage when they enable responsible AI practices at scale with speed. Strategic visibility helps leaders align AI strategy with business strategy and delivery.

How Do I Assess Current AI Visibility Across My Organization?

Assess AI visibility by mapping AI workflows, owners, environments, and decision points, then validating what leaders can see in production. Confirm completeness by checking evidence for each workflow: data classes used, approved purpose, and a defined escalation path.

Which Metrics Measure AI Strategic Visibility?

Measure strategic visibility with coverage and freshness indicators: inventory completeness, ownership coverage, evidence availability, and time-to-answer governance questions. Add risk signals such as shadow-AI detection rate and high-impact workflow oversight coverage.
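As an illustration, several of these indicators can be computed directly from inventory data. The sketch below assumes each inventory entry is a dictionary with the hypothetical keys `name`, `owner`, `has_evidence`, `high_impact`, and `has_oversight`, and that a separate list of systems known to run in production is available, for example from deployment or procurement records.

```python
def visibility_metrics(inventory, known_production_systems):
    """Compute coverage ratios in [0, 1] from a list of inventory dicts."""
    inventoried = {entry["name"] for entry in inventory}
    known = set(known_production_systems)
    high_impact = [entry for entry in inventory if entry["high_impact"]]

    def ratio(numerator, denominator):
        # Assumption: an empty denominator counts as full coverage.
        return numerator / denominator if denominator else 1.0

    return {
        "inventory_completeness": ratio(len(known & inventoried), len(known)),
        "ownership_coverage": ratio(
            sum(1 for e in inventory if e.get("owner")), len(inventory)
        ),
        "evidence_availability": ratio(
            sum(1 for e in inventory if e["has_evidence"]), len(inventory)
        ),
        "high_impact_oversight_coverage": ratio(
            sum(1 for e in high_impact if e["has_oversight"]), len(high_impact)
        ),
    }


# Invented data: two inventoried systems out of four known in production.
inventory = [
    {"name": "chat", "owner": "cx-lead", "has_evidence": True,
     "high_impact": True, "has_oversight": True},
    {"name": "fraud-triage", "owner": None, "has_evidence": False,
     "high_impact": True, "has_oversight": False},
]
known = ["chat", "fraud-triage", "doc-summarizer", "credit-scoring"]
for metric, value in visibility_metrics(inventory, known).items():
    print(f"{metric}: {value:.0%}")
```

Systems that appear in the known production list but not in the inventory are a direct shadow-AI signal, which is why inventory completeness is measured against an independent source.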

What Steps Enable Continuous AI Monitoring?

Enable continuous monitoring by instrumenting workflows to log model version, context, inputs, outputs, and outcome severity under a standard schema. Define thresholds that trigger review, route alerts to accountable owners, and verify the response loop through recurring control tests.

Which Tools Support Automated AI Observability and Compliance?

Select tools that unify telemetry across internal and vendor AI, support policy enforcement for sensitive-data handling, and provide searchable audit records. Prioritize integrations with incident management, role-based access, retention controls, and audit-ready export for examinations.

About Abstracta

With nearly two decades of experience and a global presence, Abstracta is a leading technology solutions company with offices in the United States, Canada, the United Kingdom, Chile, Colombia, and Uruguay. We specialize in AI-driven solutions development and end-to-end software testing services.

Our expertise spans industries. We believe that actively building ties propels us further and helps us enhance our clients’ software. That’s why we’ve built robust partnerships with industry leaders, including Microsoft, Datadog, Tricentis, Perforce BlazeMeter, Sauce Labs, and PractiTest, to provide the latest in cutting-edge technology.

Embrace agility and cost-effectiveness through Abstracta quality solutions.

Contact us to discuss how we can help you grow your business.

Follow us on LinkedIn & X to be part of our community!

Sofía Palamarchuk, Co-CEO at Abstracta
