Facing costly surprises from weak infrastructure testing? Validate for performance, continuity, and resilience—with Abstracta’s expert guidance for lasting impact.

In software development, starting off with a strong and reliable infrastructure isn’t just a plus; it’s absolutely necessary. This piece explores why testing your infrastructure thoroughly and validating it early matters so much.

Through our experience, we’ve seen firsthand how a thorough understanding of your system’s infrastructure can significantly reduce risks and improve performance. Tools like Datadog take this to the next level by offering real-time visibility into every component, making our testing strategies proactive rather than merely reactive.

Here, we’re not only talking about learning from our mistakes; we’re focusing on avoiding them by adopting a comprehensive approach to infrastructure testing and validation.


Let’s unlock the full potential of your infrastructure testing, together.

Learning Through Experience

I’m a firm believer in learning from one’s mistakes. When you make one, and you are truly invested in what you do and strive to do it well, you naturally want to analyze it so you can avoid it in the future.

In this post, I want to share one such lesson. In more than one client project, the information we had about the system’s infrastructure was incorrect or didn’t paint the entire picture. So, when analyzing the results of our performance tests, some things just didn’t add up.

If you’re familiar with our work or team, you know this mindset fuels us: we keep pushing until we’ve carefully tackled every relevant problem. After a lot of research, we found that there was an extra component we were unaware of, and that the network path through which we were accessing a certain component was not as direct as we thought.

The two challenges were quite different, but the solution is the same: validate the testing infrastructure.


What is Infrastructure Testing?

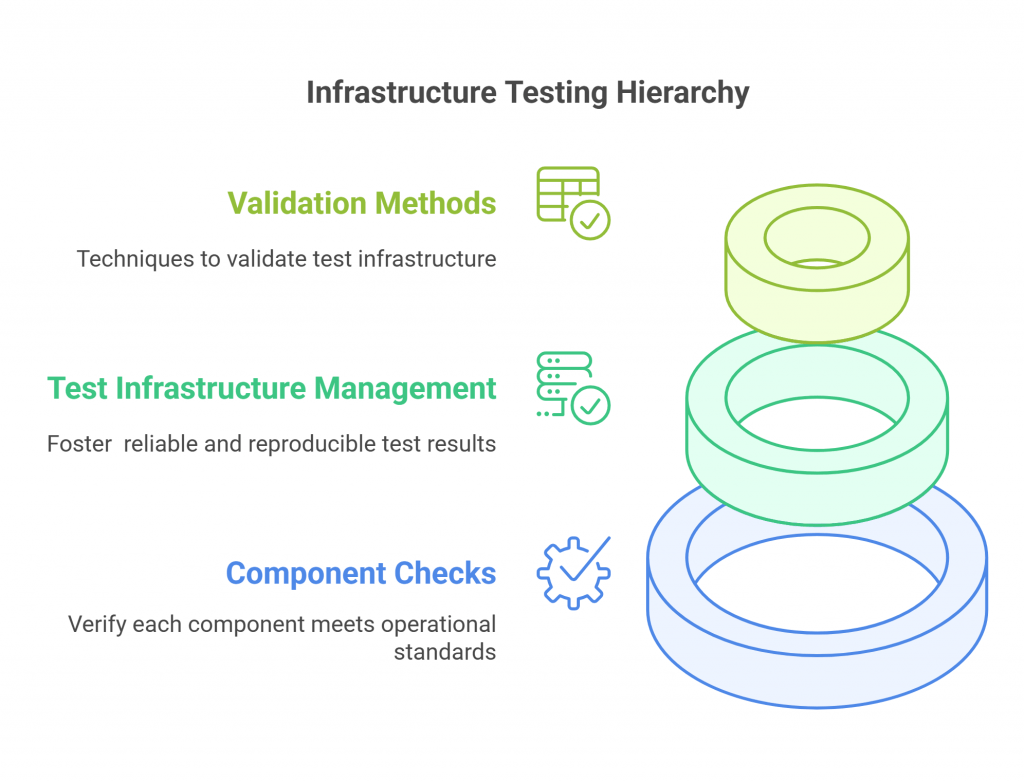

Infrastructure testing, or infrastructure validation, is a series of checks designed to confirm that every component within a network or system meets specific operational standards before full deployment. Effective test infrastructure management aims to ensure that any infrastructure tests performed yield reliable and reproducible results.

It’s a fact: some details will be overlooked when testing. That’s why it’s crucial to validate the test infrastructure before diving in. Fortunately, there are several ways to do so.

Ways to Validate Your Testing Architecture

By thoroughly examining various aspects of your infrastructure, you can identify potential issues before they impact your deployment. Below are some effective ways to validate your testing architecture and enhance the overall performance of your system.

1. Validate Components and Their Versions

Using the IP address of each component, access each node and verify that it runs the services it is supposed to. Check the operating system and its version, as well as the versions of the installed components, for instance, Java, Apache, and so on.

This verification helps confirm that all system components are up to date and compatible, reducing the risk of security vulnerabilities and performance issues.
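Part of this check can be scripted. The sketch below is a minimal example, assuming Python 3.8+ on the node: it extracts the Java major version from `java -version` output (Java prints it to stderr) and compares a documented inventory against what was actually found. The inventory keys, such as `"java"` and `"apache"`, are hypothetical; use whatever your infrastructure documentation lists.

```python
import re
import subprocess

# Matches the quoted version in `java -version` output, e.g.
#   openjdk version "17.0.8" 2023-07-18   or   java version "1.8.0_381"
_JAVA_VERSION = re.compile(r'version "([^"]+)"')


def _leading_int(text: str) -> int:
    """Parse the leading digits of a version part, e.g. '21-ea' -> 21."""
    match = re.match(r"\d+", text)
    if match is None:
        raise ValueError(f"unexpected version part: {text!r}")
    return int(match.group())


def java_major_version(version_output: str) -> int:
    """Return the Java major version found in `java -version` output."""
    match = _JAVA_VERSION.search(version_output)
    if match is None:
        raise ValueError("no Java version string found")
    parts = match.group(1).split(".")
    # Java 8 and earlier report "1.<major>"; Java 9+ start with the major number.
    if parts[0] == "1" and len(parts) > 1:
        return _leading_int(parts[1])
    return _leading_int(parts[0])


def local_java_version_output() -> str:
    """Run `java -version` on this node; Java writes the version to stderr."""
    result = subprocess.run(
        ["java", "-version"], capture_output=True, text=True, check=True
    )
    return result.stderr


def version_mismatches(expected: dict, actual: dict) -> list:
    """Compare documented component versions against those found on a node."""
    problems = []
    for component, wanted in expected.items():
        found = actual.get(component)
        if found is None:
            problems.append(f"{component}: not found (expected {wanted})")
        elif found != wanted:
            problems.append(f"{component}: found {found}, expected {wanted}")
    return problems
```

On a node, `version_mismatches({"java": "17"}, {"java": str(java_major_version(local_java_version_output()))})` returns an empty list when the installed Java matches the documentation.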

2. Validate Initial Configurations

In performance tests, testers actively compare different configurations to look for optimizations and improve results. Here, manual exploratory testing can be invaluable, allowing testers to explore configurations that automated tools might overlook.

So, to validate the accuracy of the documented results, review the initial configurations, especially the most relevant ones.

This involves examining the size of each connection pool (in the database or the web server), the maximum and minimum allocated memory (such as in the JVM), the test data used during the simulation, and so on.
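One way to make this review repeatable is to record the documented baseline and compare it with what each server actually reports. The following sketch, assuming Python 3.8+, parses `-Xms` and `-Xmx` from a JVM command line and reports any setting that drifts from its documented value. When a heap flag is repeated, the JVM generally honors the last occurrence, and the parser does the same. The key `db_pool_max` is hypothetical: how you read a connection pool size depends on your database and web server.

```python
import re

# Multipliers for the JVM size suffixes (no suffix means bytes).
_UNITS = {"": 1, "k": 1024, "m": 1024 ** 2, "g": 1024 ** 3}
_HEAP_FLAG = re.compile(r"-Xm([sx])(\d+)([kKmMgG]?)")


def heap_settings(jvm_args: str) -> dict:
    """Extract -Xms/-Xmx values (in bytes) from a JVM command line."""
    settings = {}
    for kind, amount, unit in _HEAP_FLAG.findall(jvm_args):
        name = "Xms" if kind == "s" else "Xmx"
        # Later occurrences overwrite earlier ones.
        settings[name] = int(amount) * _UNITS[unit.lower()]
    return settings


def config_drift(documented: dict, observed: dict) -> dict:
    """Map each documented setting whose observed value differs to (documented, observed)."""
    return {
        key: (value, observed.get(key))
        for key, value in documented.items()
        if observed.get(key) != value
    }
```

An empty result from `config_drift` means the environment matches what the test report will claim; anything else should be fixed or documented before results are compared.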

3. Validate Connections and Network Routes

From each node, run a traceroute to every node it connects to in order to validate the network hops. Do this from the load-generating machines as well.

This step is crucial for identifying and resolving potential network bottlenecks and for ensuring optimal data flow and system responsiveness.
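To compare a traceroute against the documentation, the sketch below, assuming Python 3.8+ on a Linux or macOS node where `traceroute -n` is available (Windows uses `tracert`), extracts the responding IP of each hop and lists any hop that is not in the documented path. The documented path is hypothetical input you would take from your own network diagram. A `*` hop is not necessarily a problem, since some routers don't answer probes, but an unknown IP in the middle of the route is worth asking about.

```python
import re
import subprocess
from typing import List, Optional

# A hop line starts with the hop number, e.g. " 2  10.0.0.1  0.41 ms ..."
_HOP_LINE = re.compile(r"^\s*(\d+)\s+(.*)$")
_IPV4 = re.compile(r"\d{1,3}(?:\.\d{1,3}){3}")


def run_traceroute(target: str) -> str:
    """Run `traceroute -n` (numeric output, no DNS lookups) from this node."""
    result = subprocess.run(
        ["traceroute", "-n", target], capture_output=True, text=True, timeout=120
    )
    return result.stdout


def parse_hops(output: str) -> List[Optional[str]]:
    """Return the first responding IP of each hop, or None if the hop timed out."""
    hops = []
    for line in output.splitlines():
        match = _HOP_LINE.match(line)
        if match is None:
            continue  # skips the "traceroute to ..." header
        ip = _IPV4.search(match.group(2))
        hops.append(ip.group(0) if ip else None)
    return hops


def unexpected_hops(hops: List[Optional[str]], documented_path: List[str]) -> List[str]:
    """List hops that are not in the documented path ('*' marks a silent hop)."""
    return [hop or "*" for hop in hops if hop not in documented_path]
```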

4. Validate Ports

I mention this in particular because it is what made us realize one of the problems we ran into. If you are accessing the web server on port 80, where there is supposedly a Tomcat, check that the Tomcat is actually configured on port 80.
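Checking which ports actually answer is easy to script. This minimal sketch, assuming Python 3.8+ and plain TCP reachability as the test, tries a list of candidate ports and returns the ones that accept a connection; the candidate list is up to you. A port that accepts connections only tells you that something is listening, not what it is, so on Linux you can follow up with `ss -ltnp` to see which process owns each listening socket.

```python
import socket
from typing import Iterable, List


def is_listening(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, timed out, unreachable, or name not resolved
        return False


def listening_ports(host: str, candidates: Iterable[int], timeout: float = 2.0) -> List[int]:
    """Return the candidate ports on `host` that accept TCP connections."""
    return [port for port in candidates if is_listening(host, port, timeout)]
```

For example, running `listening_ports("127.0.0.1", [80, 443, 8080])` on the application node and getting `[80, 8080]` back is a hint worth following up: the process on port 80 may not be the Tomcat you think you are testing.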

In our case, Tomcat was on port 8080 because it had been placed behind an Apache. This is a common practice*, but we weren’t made aware of it; we were later told that was because “The Apache is lightweight and does not add overhead.” Seriously!?

That is usually true, but it doesn’t mean Apache can’t cause contention when something is misconfigured, as in this case. The number of connections it accepted was not enough for the load, so it queued the requests. That is why JMeter reported certain response times, while the time-taken values recorded in the Tomcat access logs were much smaller.

*Combining Tomcat and Apache has certain advantages: it can allow for better concurrency management and resource optimization through compression and caching.
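The discrepancy we saw can be turned into a quick check: if the client-side times reported by JMeter are much larger than the server-side times Tomcat logs for the same test, something between the two is probably queuing requests. This sketch assumes a JMeter CSV results file (its `elapsed` column is in milliseconds) and a Tomcat access log whose pattern puts the time-taken value (`%D`) in the last field. That log layout and the 2× threshold are assumptions, and you should check your Tomcat version’s AccessLogValve documentation for the unit `%D` uses before comparing the two series.

```python
import csv
import statistics
from typing import Iterable, List


def jtl_elapsed_ms(lines: Iterable[str]) -> List[int]:
    """Read the `elapsed` column (ms) from JMeter CSV results."""
    return [int(row["elapsed"]) for row in csv.DictReader(lines)]


def access_log_time_taken(lines: Iterable[str]) -> List[int]:
    """Assumes the access log pattern ends with %D, so the last field is time-taken."""
    return [int(line.split()[-1]) for line in lines if line.strip()]


def queueing_suspected(client_ms: List[int], server_ms: List[int], max_ratio: float = 2.0) -> bool:
    """Flag when the client-side median exceeds the server-side median by more than max_ratio."""
    return statistics.median(client_ms) > max_ratio * statistics.median(server_ms)
```

Both readers accept open files, e.g. `with open("results.jtl", newline="") as f: client = jtl_elapsed_ms(f)`. Little’s law is a useful companion here: the average number of requests in flight equals throughput times average response time, so at 200 requests per second and 0.5 s per request about 100 requests are in flight, and a proxy that accepts fewer concurrent connections than that has to queue the rest.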

5. Support Test Continuity and Organizational Readiness

While version validation and configuration checks are essential, sustaining test continuity over time requires aligning both infrastructure and organizational practices.

A well-designed test infrastructure goes beyond machines and services: it includes up-to-date documentation, accessible test libraries, and consistent test scripts that evolve with the system. These assets enable test teams to maintain their rhythm across iterations and reduce the risk of fragmentation between cycles.

Creating the conditions for continuous testing also depends on having a test orchestration layer that connects infrastructure, tools, and processes. It’s not just about automation—it’s about making testing reliably available whenever needed. This includes planning for roles, environments, and shared responsibilities, so that testing begins smoothly, no matter where in the cycle you are.

When this continuity is missing, even small misalignments can disrupt insight generation and slow down the feedback loop.

6. Simulate Real-World Scenarios

Beyond technical verification, conducting full-system testing exercises allows teams to observe how all infrastructure components interact under stress or unexpected conditions. These tests help surface hidden issues—such as misconfigured file servers, failing dependencies, or asynchronous data paths—that may not be visible in isolated validations.

This approach can be especially valuable when simulating load over time, validating fallback mechanisms like a backup server, or observing how network infrastructure performs under varied traffic patterns.

In mobile testing, where real-world infrastructure is unavailable or too costly to use for testing, combining an in-house device lab with scalable cloud testing solutions provides flexibility. These hybrid approaches allow organizations to balance realism, speed, and cost across different testing phases.

Integrating Datadog for Streamlined Infrastructure Testing

Image: Federico Toledo with Lina Giraldo, our Partner Manager at Datadog, enjoying the DASH conference.

Datadog’s comprehensive monitoring capabilities offer invaluable insights into every layer of your technology stack during infrastructure testing. This real-time visibility helps pinpoint performance issues and confirm that all components work seamlessly under varied conditions.

By leveraging Datadog, teams can detect anomalies, analyze system behavior, and track performance changes effectively.

Incorporating it early in the testing process allows for the establishment of baseline metrics and continuous monitoring of system adjustments. This proactive approach aids in identifying optimization opportunities based on accurate data, leading to more reliable system performance.
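To make baseline metrics concrete, here is a tool-agnostic sketch; it does not call Datadog’s API. Given baseline values captured early (for example, exported from a dashboard), it flags metrics whose current value is worse by more than a relative tolerance. It assumes every metric is one where higher is worse, such as latency or error rate; the metric names and the 10% tolerance are hypothetical.

```python
from typing import Dict


def regressions(
    baseline: Dict[str, float], current: Dict[str, float], tolerance: float = 0.10
) -> Dict[str, float]:
    """Return {metric: relative increase} for metrics that worsened beyond `tolerance`.

    Assumes higher values are worse. Metrics missing from `current`, or with a
    non-positive baseline, are skipped rather than guessed at.
    """
    flagged = {}
    for name, base in baseline.items():
        if name not in current or base <= 0:
            continue
        change = (current[name] - base) / base
        if change > tolerance:
            flagged[name] = round(change, 3)
    return flagged
```

For instance, a p95 latency that moves from 200 ms to 260 ms is flagged as a 0.3 (30%) increase, while CPU moving from 50% to 52% stays within tolerance.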

Not only does Datadog simplify the validation process, but it also promotes a collaborative environment for decision-making. Its detailed dashboards and reporting tools make performance insights easily accessible, enabling swift, informed optimizations for robust system functionality.

Abstracta & Datadog Professional Services

Accelerate your cloud journey with confidence! We joined forces with Datadog to leverage real-time infrastructure monitoring services and security analysis solutions for modern applications.


Enhanced Validation Techniques for Testing Infrastructure

1. Automated Testing and Regression Testing

Automated testing and regression testing are key to maintaining robust infrastructure. They play a pivotal role in modern testing strategies.

Automated tests enable consistent test execution with minimal human intervention, enhancing the accuracy of test results and efficiency of the testing process.

To support these efforts, leveraging infrastructure testing tools designed specifically for such environments can further enhance test efficiency and accuracy.


2. Test Tools and Test Environments

Utilizing different test tools and establishing dedicated test environments helps simulate different user and system scenarios. This approach helps identify potential issues in software products before they impact the production environment.

By closely mirroring real-world conditions, this strategy supports a thorough examination of the software and improves the detection of flaws or weaknesses. Consequently, it allows for the refinement and optimization of the product, safeguarding its performance and reliability in the live environment.


3. Security and Load Testing

Regular security testing and load testing are crucial for validating the resilience and performance of infrastructure under stress. These tests assess the system’s ability to handle high traffic and detect vulnerabilities that could be exploited.

Furthermore, these testing practices enable organizations to fine-tune their systems, optimizing for both security and performance. By identifying and addressing these issues early, companies can prevent costly downtime and protect against data breaches, enabling a robust and reliable infrastructure.
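As a rough illustration of what a load test does at its core, the sketch below sends a fixed number of requests with bounded concurrency and counts failures and latencies. It is a minimal smoke test using only the Python standard library, not a replacement for a tool like JMeter; `send_request` is any callable that returns an HTTP status, and `http_get` is one hypothetical implementation of it.

```python
import statistics
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Tuple


def http_get(url: str, timeout: float = 10.0) -> int:
    """Send one GET request and return the HTTP status code."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.status


def run_load(send_request: Callable[[], int], total: int, concurrency: int) -> Dict[str, float]:
    """Send `total` requests using at most `concurrency` threads and summarize them."""

    def one_call(_: int) -> Tuple[bool, float]:
        start = time.perf_counter()
        try:
            ok = 200 <= send_request() < 400
        except Exception:  # urllib raises for 4xx/5xx responses and network errors
            ok = False
        return ok, (time.perf_counter() - start) * 1000

    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(one_call, range(total)))

    latencies = [ms for _, ms in results]
    return {
        "requests": total,
        "errors": sum(1 for ok, _ in results if not ok),
        "median_ms": statistics.median(latencies) if latencies else 0.0,
        "max_ms": max(latencies, default=0.0),
    }
```

A call such as `run_load(lambda: http_get("http://test-env.example/health"), total=500, concurrency=20)` (hypothetical URL) gives a quick signal before a full JMeter run; the thread pool caps concurrency so the load generator itself stays predictable.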


Practical Considerations for Validating Test Infrastructure

To keep testing environments robust, it is essential to perform infrastructure testing regularly.

Test infrastructure refers to the setup prepared to execute test cases that simulate user traffic and data processing on various components of the infrastructure. This includes not only virtual servers and test platforms but also hardware and software configurations that reflect the system’s real demands.

These environments must support different types of testing, like performance testing, stress testing, integration testing, and security testing, to assess the system’s performance and resilience under real-world conditions.

By doing so, teams can reduce the risk of late-stage surprises and gain confidence in the system’s readiness.

Key Components of a Reliable Testing Environment

Building stable testing environments requires meticulous planning and execution. Each test environment should ideally mirror the production environment to detect any potential disruptions in the real world. This includes replicating the network configuration, operating systems, and system architecture.

This alignment allows for identifying disruptions early, before they manifest in production. Incorporating test automation frameworks and aligning them with the intended architecture enables more reliable, repeatable results and helps avoid surprises during performance evaluations.
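A simple way to check how closely a test environment mirrors production is to describe both as key/value inventories (operating system, component versions, pool sizes, whether a proxy sits in front, and so on) and diff them. This is a minimal sketch; the keys are hypothetical, and `ignore` covers settings that are expected to differ, such as hostnames.

```python
from typing import Dict, FrozenSet, List


def parity_gaps(
    prod: Dict[str, str], test: Dict[str, str], ignore: FrozenSet[str] = frozenset()
) -> List[str]:
    """List every setting that differs between production and test (None = missing)."""
    gaps = []
    for key in sorted((set(prod) | set(test)) - ignore):
        prod_value, test_value = prod.get(key), test.get(key)
        if prod_value != test_value:
            gaps.append(f"{key}: prod={prod_value!r}, test={test_value!r}")
    return gaps
```

A gap like a front proxy that exists in production but not in the test environment is exactly the kind of difference that can make test results misleading.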

Integration into Development Processes

Effective infrastructure testing also involves the integration of testing processes into the software development lifecycle. Embedding testing from the start—covering infrastructure behavior under stress, in isolation, and during complex interactions—exposes flaws and hidden dependencies early on.

This integration supports continuous testing, facilitating faster iterations and reducing the chance of discovering infrastructure weaknesses only after deployment.

It also helps verify whether evolving infrastructure requirements, such as regulatory constraints or scalability needs, are being met in real-world conditions. When infrastructure testing is disconnected from development planning, even a single human error in environment setup or configuration can undermine test validity, delay releases, or obscure root causes during debugging.

Common Test Environment Issues

Even when a testing plan looks solid on paper, real-world challenges often emerge due to overlooked dependencies or environmental inconsistencies. Addressing these test environment issues proactively is key to a smooth testing experience.

Some of the most common issues include:

- Misconfigured proxy servers or mail servers that behave differently under test load.

- Resource exhaustion caused by outdated limits on CPU, memory, or connections.

- Gaps in coordination between the quality assurance team, infrastructure maintenance team, and system administrator team, leading to misalignment.

- Outdated or incompatible test automation frameworks that don’t reflect production behavior.

- Missing or insufficient data migration testing steps that compromise stability.

- Inadequate network-level testing, failing to account for latency or bottlenecks in distributed systems.

Maintaining infrastructure environments isn’t just about stability—it’s about aligning teams, tools, and environments with the evolving goals of the system. Each test should be scoped not only in technical terms but also organizationally, with shared accountability across roles.

How to Address These Issues Effectively

To tackle these issues effectively, we recommend three guiding actions:

- Create early alignment between QA, DevOps, and sysadmin teams: Many issues come from not knowing who changed what. Simple shared checklists and regular syncs help uncover mismatches early.

- Add environment checks to your CI/CD pipelines: Before running any test, automatically verify key settings like open ports, correct versions, and network access. These quick checks can catch critical misconfigurations before they escalate.

- Monitor your test infrastructure in real time: Infrastructure-aware tools like Datadog help teams stay ahead of anomalies during execution. This helps you react fast to unexpected behaviors, especially during stress testing or data migration testing.

These steps help prevent surprises, streamline debugging, and create a feedback loop that continuously strengthens the test environment.
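The CI/CD environment check described above could look something like this sketch: a list of named checks runs before the test suite, and a non-zero exit code stops the pipeline. It assumes Python 3.8+; the hostnames in the usage note are hypothetical, and in practice you would add version and configuration checks alongside the port checks.

```python
import socket
from typing import Callable, List, Tuple

Check = Tuple[str, Callable[[], bool]]


def tcp_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if host:port accepts a TCP connection."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def run_preflight(checks: List[Check]) -> List[str]:
    """Run each check, print PASS/FAIL, and return the names of the failures."""
    failed = []
    for name, check in checks:
        try:
            passed = bool(check())
        except Exception as exc:  # a crashing check counts as a failure
            passed, name = False, f"{name} ({exc})"
        print(("PASS  " if passed else "FAIL  ") + name)
        if not passed:
            failed.append(name)
    return failed


def main(checks: List[Check]) -> int:
    """Exit code for the CI job: 0 when every check passes, 1 otherwise."""
    return 1 if run_preflight(checks) else 0
```

In a pipeline step, something like `sys.exit(main([("app port 80", lambda: tcp_open("app.test.internal", 80))]))` fails the job before any load is generated against a misconfigured environment.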

The Bottom Line – Infrastructure Testing

Testing is key in the lifecycle of infrastructure development and maintenance. By investing time in validating testing environments, organizations can save considerable resources and avoid the high costs associated with downtime or data breaches.

This is especially true when planning for complex scenarios that involve components such as the primary server, cloud services, and dependencies across hardware and software layers. Each testing task must align with the broader system strategy to maintain coherence and enable stable environments that support consistent performance, even under pressure.

Real-world examples have shown us that understanding what’s happening beneath the surface often requires extra investigation and cross-checking.

On balance, it’s best to carry out certain validations before starting to analyze a system’s behavior, so you can provide a better service without depending on how much others know, or disclose, about the existing infrastructure.

The Take-Home Message

Never underestimate the importance of thorough infrastructure testing. It is a critical step in verifying that the core technology of your business is robust enough to face upcoming challenges.

Don’t skip the part where you validate the testing infrastructure, since at the end of the day, we’re seeking information to reduce risk.

How We Can Help You

With over 16 years of experience and a global presence, Abstracta is a leading technology solutions company with offices in the United States, Chile, Colombia, and Uruguay. We specialize in software development, AI-driven innovations & copilots, and end-to-end software testing services.

Our expertise spans industries. We believe that actively building strong partnerships propels us further and helps us enhance our clients’ software. That’s why we’ve forged robust partnerships with industry leaders like Microsoft, Datadog, Tricentis, Perforce BlazeMeter, and Sauce Labs, empowering us to incorporate cutting-edge technologies.

Need help with infrastructure testing? Embrace agility and cost-effectiveness through our tailored services and solutions!



Federico Toledo, Chief Quality Officer at Abstracta
