Have you ever wondered when it is a good time to automate a test and what automation testing is mostly used for? Don’t miss this useful guide for deciding if a test case is worth the time and energy of automating.

By Charles Rodriguez and Alejandro Berardinelli

“Let’s automate testing as much as possible.” That always sounds like a good idea, right? It’s the way the world is going in general, isn’t it? In software testing, automation can be a huge productivity enhancer, but not in all contexts.

In this post, we’ll present an approach to automated software testing that aims to assess its feasibility according to the context of the project. It’s very useful for a software tester to understand when it is a good time to automate a test.

Software testers should be mindful of how they can optimize their work, whether by collaborating with colleagues and developers or by encouraging themselves to try out test automation tools.

We’ll cover some concepts that are fundamental when you don’t yet have experience with test automation, and evaluate its importance and benefits in relation to manual functional testing.

What is Test Automation?

Historically, automation arose to reduce the human effort required in activities that could be replicated by a programmable system or machines. All this simplifies onerous, repetitive, or complex work, making it effective and more productive. This way, it’s possible to save energy, time, and costs, while freeing people up to focus on other tasks.

In software development, this practice can be approached in the same way by automating certain efforts that are done manually. The steps that testers follow are translated into repeatable scripts, which reduces test execution times and lets testers focus their energy on other tasks that provide greater value.

When To Use Automation Testing?

Common questions that come up when a tester thinks about automating are “When is something automatable?”, “When can we use automation?” and, of course, “When is a good time to automate a test?”

In some cases, automation allows us to run tests (sometimes repetitive tests) that a human could not, especially taking into account the limitation of the number of executions we can do in a certain period.

There are several things to consider when determining whether something should be automated and whether doing so can help us achieve better test coverage. Some of them are the potential investment, the approach, the benefits, and current knowledge of the manual testing process.

We first have to fully understand and become experts in the manual process, and only then is it possible to automate. Complete knowledge of this process is the pillar of this path. Of course, all this implies that manual testing is not completely substitutable. There is often a debate about the impending death of manual testing, but as the saying goes, “It simply cannot die!”

In actual fact, you have to be highly skilled in your manual testing before you can automate it. Learn how to walk, then run.

Debunking Myths About Automated Testing

Automation has its advantages and disadvantages, depending on the project, time, cost, quality, and methodology. It’s crucial to understand the context and that everything you do is based on fulfilling the objectives in the best possible way, selecting and applying the appropriate methods, tools, and skills.

Common myths about test automation:

❌You can automate everything.

❌Automating always leads to better software quality.

❌Automated testing is better than manual.

❌Automating brings a rapid return on investment.

Of course, there may be times when one of these myths is actually true, but it would be an exception to the rule.

Some Principles

In the Context-Driven Testing school, there exist seven principles that help to understand the goal of testing, whether it be manual or automated.

- The value of any practice depends on its context.
- There are good practices in context, but there are no “best practices”.
- People, working together, are the most important part of the context of any project.
- Projects are not static and often take unpredictable paths.
- The product is a solution. If the problem is not solved, the product will not work.
- Good software testing is a challenging intellectual process.
- Only through judgment and skill, practiced cooperatively throughout the project, will we be able to do the right things at the right time to test our products effectively.

Cem Kaner, James Bach, and Bret Pettichord proposed these principles. They presented them in their book “Lessons Learned in Software Testing: A Context-Driven Approach”, which helps us grasp the importance of being able to adapt to the current project situation.

Manual Testing vs Automated Testing

When getting started, we might want to automate everything, but the cost of developing and maintaining the test scripts for automated tests is not something to take lightly.

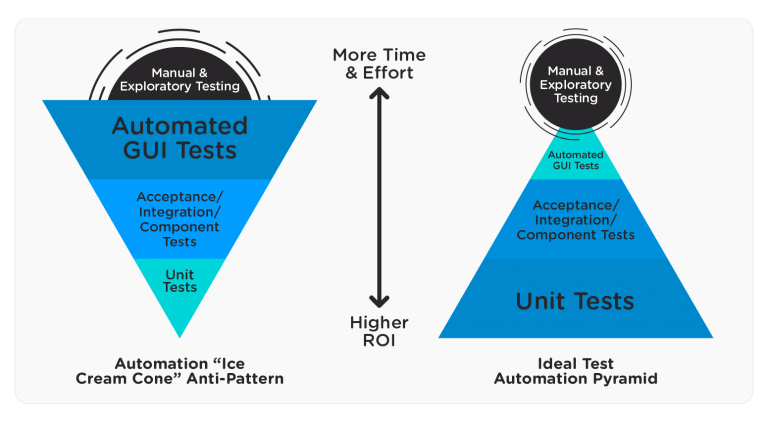

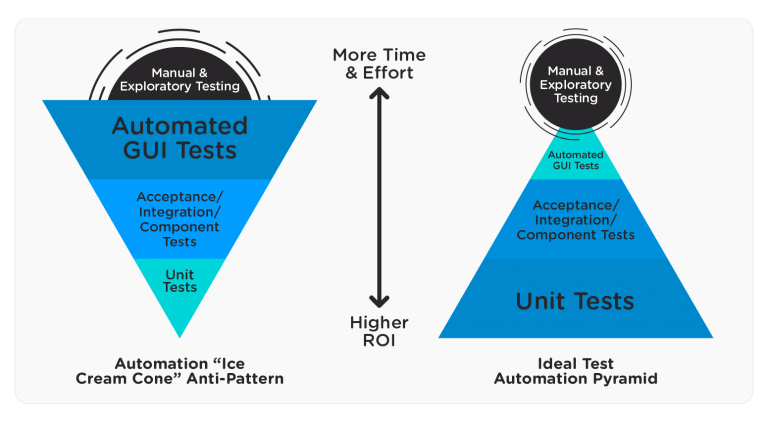

When a project bets on automation, ideally it should build on a solid base of unit tests, which prevent as many bugs as possible by giving immediate feedback, and then continue up through the other layers. This way, manual and exploratory testing are most valuable at the UI level, focusing on the cases that cannot be automated.

This concept is explained by Mike Cohn’s test automation pyramid:

On the left, we see how automation is commonly done and on the right, we can see the ideal way, where unit tests carry the most weight in the pyramid.

Although there are differences between automated and manual testing, they aren’t mutually exclusive, but are seen as complementary tasks in the search for better software quality.

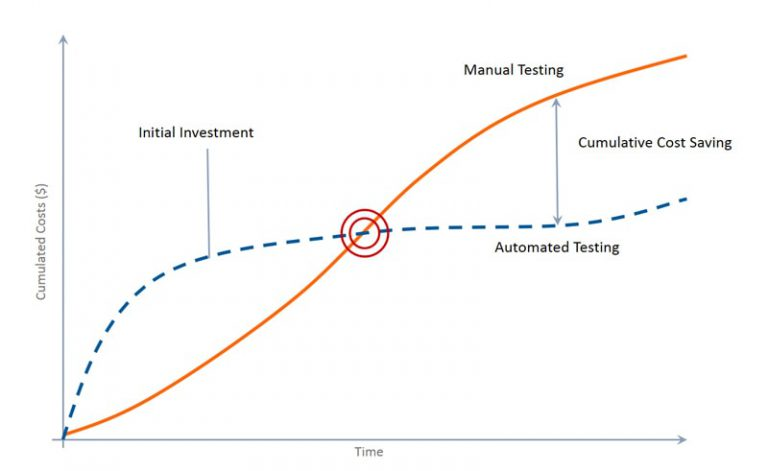

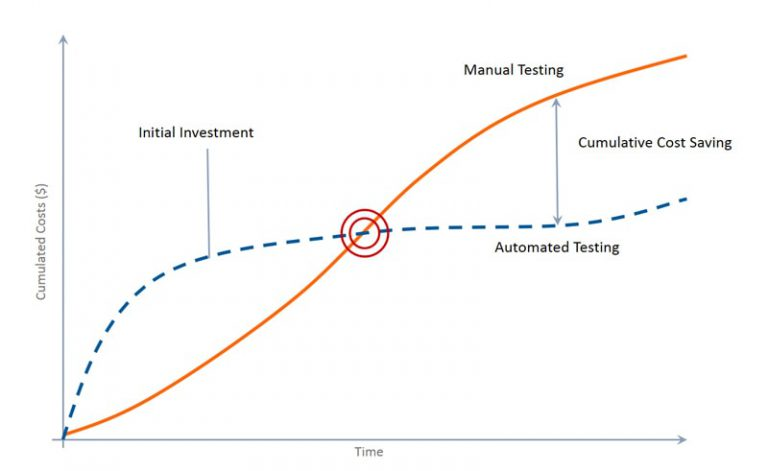

If we think about the return on investment of testing, testing a new functionality manually allows you to quickly know more about the application at a low cost. As knowledge is acquired, the inventory of tests increases, and consequently, the cost also increases for manual tests.

On the other hand, automation has a higher initial cost which decreases as it progresses. This behavior can be seen in the following graph:

Analyzing this, we see that automation requires a high initial investment up to the break-even point. That’s when we begin to see the positive impact it has on long-term costs compared to manual testing. On the whole, we can conclude that both testing activities are fully compatible and generate short- and long-term benefits.
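
To make the break-even idea concrete, here is a minimal sketch under a deliberately simplified assumption: every manual run costs the same fixed amount, and automation costs a one-time investment plus a smaller fixed amount per run. The function names and the figures in the usage note are hypothetical and only illustrate the shape of the comparison, not real project data.

```python
import math
from typing import Optional


def cumulative_manual_cost(runs: int, cost_per_manual_run: float) -> float:
    """Total cost of executing the suite manually `runs` times."""
    return runs * cost_per_manual_run


def cumulative_automated_cost(
    runs: int, initial_investment: float, cost_per_automated_run: float
) -> float:
    """Up-front scripting cost plus the (smaller) cost of each automated run."""
    return initial_investment + runs * cost_per_automated_run


def break_even_runs(
    initial_investment: float,
    cost_per_manual_run: float,
    cost_per_automated_run: float,
) -> Optional[int]:
    """Smallest number of runs at which automation costs no more than manual.

    Returns None when an automated run is not cheaper than a manual one,
    because in that case the investment is never recovered.
    """
    saving_per_run = cost_per_manual_run - cost_per_automated_run
    if saving_per_run <= 0:
        return None
    return math.ceil(initial_investment / saving_per_run)
```

For example, with an assumed 40 hours to script a suite, 2 hours per manual run, and 0.5 hours per automated run (maintenance included), `break_even_runs(40.0, 2.0, 0.5)` says how many executions are needed before automation pays off. A suite that will only run a handful of times may never reach that point.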

What to Automate?

Now that we are aware of the importance and benefits of test automation, we have to identify the cases that we can automate. For this, we must take into account the objective that we are pursuing and at what level, as we saw in the Cohn pyramid.

What’s the Objective?

The first thing we should always aim for is a higher level of software quality, so we need to analyze whether automation “fits” within the project. To answer that question, we recommend carrying out a feasibility analysis in relation to the objectives.

When analyzing whether it is a good time to automate a test, the following are some scenarios in which automation will most likely make sense:

- There’s technical debt to eliminate.
- Regression tests are time-consuming.
- The project is highly complex and long-term.

Which Test Cases Should We Automate?

What percentage of functional testing should be automated? As we have seen, not everything is automatable in every context. That is why it is important to know which cases are suitable for our purpose.

At the code level, on the developer side, unit tests are the easiest tests to script. On the tester side, we usually work on automating the regression cases at the UI and API level, focusing first on the most critical and complex flows.
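
As an illustration of why unit tests are the cheapest layer to script, here is a minimal sketch using Python’s standard `unittest` module. The `apply_discount` function is a hypothetical piece of application code invented for this example, and `run_suite` is just a small helper that executes the tests programmatically.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: price after a percentage discount."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_regular_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_discount_keeps_price(self):
        self.assertEqual(apply_discount(80.0, 0), 80.0)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 120)


def run_suite() -> unittest.TestResult:
    """Run the test case and return the result object for inspection."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

Each test needs no browser, no deployed environment, and no test data beyond a few literals, which is what makes this layer fast to write and fast to run. In a real project the same tests would typically be executed with `python -m unittest` on every commit.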

The following are those test cases that can be automated:

Regression Tests

Given the situation in which we already have a defined test suite that must be executed periodically after each product release, the effort to run them manually becomes repetitive. It also takes time away from other tasks that are not automatable and where we can provide more value.

These regression test cases are highly automatable and particularly convenient to integrate within a CI/CD model. Because the test scripts can run unattended, we save cost and gain time that we can spend on other activities.

High-Risk Tests

The stakeholders usually agree upon these cases, placing emphasis on checking the high-priority and critical functions. If these functions fail, they greatly affect the business model. This is why people call this approach “Risk-based Testing”.

If we automate the cases that test these functionalities, we can find, almost immediately after each release, the incidents that require quick action and, if necessary, block that release from going to production.
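
One way to turn that agreement into an automation backlog is to score each candidate case and automate the riskiest first. The sketch below assumes a common convention, risk as failure likelihood multiplied by business impact on 1 to 5 scales; the class, the function, and the threshold are hypothetical and would be agreed with stakeholders in practice.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class CandidateCase:
    """A test case being considered for automation (illustrative model)."""
    name: str
    failure_likelihood: int  # assumed scale: 1 (rare) to 5 (frequent)
    business_impact: int     # assumed scale: 1 (minor) to 5 (critical)

    @property
    def risk_score(self) -> int:
        # Assumed scoring rule: likelihood multiplied by impact.
        return self.failure_likelihood * self.business_impact


def select_for_automation(
    cases: List[CandidateCase], threshold: int
) -> List[CandidateCase]:
    """Keep cases at or above the threshold, highest risk first."""
    risky = [case for case in cases if case.risk_score >= threshold]
    return sorted(risky, key=lambda case: case.risk_score, reverse=True)
```

With cases such as a checkout payment (likelihood 4, impact 5), a login (3, 5), and a profile avatar upload (2, 1), a threshold of 12 would select the payment and login flows and leave the avatar upload for manual or exploratory testing.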

Complex and Time-Consuming Tests

There may be cases in a project that are too complex to reproduce manually. If we capture them in a script, they become much easier to execute in an automated way.

If it’s a form with a lot of data, perhaps the tester is more error-prone, especially if he or she has to test the same form with many variants of data. We can reduce the probability of error by automating.

Repetitive Test Cases

Just as regression testing becomes a repetitive task, we might encounter specific cases where automation is advantageous. For instance, manually testing a large amount of data for the same flow could take a considerable amount of time and if we have to repeat it, the task becomes somewhat tedious.

If we automate this flow, however, we can parameterize the data and eliminate the need to test each value manually. This is known as data-driven testing, where we parameterize an automated test and feed it with data from a source such as a file or a database.
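
A minimal sketch of data-driven testing with only the Python standard library could look like the following. The validation rule in `is_valid_username` and the inline CSV are hypothetical stand-ins: in a real suite the function would be the application under test and the data would come from a file or a database.

```python
import csv
import io
from typing import Iterable, List, TextIO, Tuple

# Hypothetical data source, inlined so the example is self-contained.
TEST_DATA = """username,expected_valid
alice,true
ab,false
user_name_01,true
name with spaces,false
"""


def is_valid_username(username: str) -> bool:
    """Hypothetical rule: 3 to 20 characters, letters, digits, underscores."""
    return 3 <= len(username) <= 20 and all(
        ch.isalnum() or ch == "_" for ch in username
    )


def load_cases(source: TextIO) -> List[Tuple[str, bool]]:
    """Read (input, expected result) pairs from a CSV source."""
    reader = csv.DictReader(source)
    return [(row["username"], row["expected_valid"] == "true") for row in reader]


def run_data_driven_test(cases: Iterable[Tuple[str, bool]]) -> List[str]:
    """Run the same check for every data row and collect failure messages."""
    failures = []
    for username, expected in cases:
        actual = is_valid_username(username)
        if actual != expected:
            failures.append(f"{username!r}: expected {expected}, got {actual}")
    return failures
```

Calling `run_data_driven_test(load_cases(io.StringIO(TEST_DATA)))` runs one test flow against every row. Adding a new variant then means adding a line of data rather than writing or manually executing another test.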

Tool Selection

Now that we know what to automate, we can move on to selecting the tool we are going to use. This activity can be among the most intricate to analyze initially due to the multitude of available tools. Nevertheless, we must take into account the project, budget, knowledge, and experience of the participants.

There exist several open-source, commercial, and custom tools that differ in their limitations and potential. To choose the right tool, we must clearly understand the requirements that we need to fulfill in order to continue the cost-benefit analysis of its utilization.

At Abstracta, there is a wide variety of tools that we select according to the context of the project. We often use Selenium, Appium, Cucumber, Ghost Inspector, and GXtest due to the flexibility that they provide.

Our Favorite Test Automation Tools

- Selenium: An open-source tool that is widely used around the world for testing web applications on different browsers and platforms.
- Appium: Another open-source framework, built on the same WebDriver protocol that Selenium uses, for automating tests mainly on iOS and Android mobile devices.
- Cucumber: This tool supports the BDD (Behavior Driven Development) approach. Cucumber’s main advantage is its ease of use, since it’s very intuitive, provides a wide variety of features, and is also open-source.
- Ghost Inspector: The most remarkable thing about this tool is that it allows us to automate without knowing how to code, which makes it great for beginners. On the other hand, this tool is commercial and only allows 100 free executions per month.
- GXtest: At Abstracta, we developed GXtest to enable automation for applications developed with GeneXus in a simple way (the only one of its kind). It also allows for integrating tests in a CI/CD model.

It’s important to acknowledge that there are no universal best tools. Yes, we can choose between those that offer us more flexibility, but the choice will always depend on the application under test and the decision-making criteria.

Conclusion

In general, we can find different ways to guide our test automation efforts, but the most important thing is to have a well-defined purpose and objective.

The context of the application under test is no minor detail. We should know when it is a good time to automate a test. Not everything can be automated, and the return on investment depends on a robust feasibility analysis.

If automation takes place, we highly recommend implementing it at all levels and putting greater effort into the lower levels, such as unit and API tests, and not only at the UI level.

By considering the above, we can prevent a greater number of bugs and complement manual testing, which relies on the skill of software testers, while sparing them the excessive load of repetitive tasks that can be programmed.

